There has been a lot of noise in the SEO community around AI and artificially generated content for some time, and the recent emergence of ChatGPT has added further fuel to the fire.

Within the noise is a debate about whether AI content is bad and will be punished by Google. Tools, extensions, and methods have even emerged to help users detect AI-written content, which is widely bemoaned as bad.

Google, through channels such as Danny Sullivan/Search Liaison, is maintaining a “focus on the output and not the source” approach. That is, spammy content that offers no value and is designed purely to rank is bad regardless of whether it was written by a human or a robot. This also brings us back to EEAT and the user value proposition.

So this brings me to an important question – given the sensitivity of the topic and the fervor it has received – can we actually tell the difference between a piece of content written by a machine and a piece of content written by a human?

To find out, I had one piece of content written by a Fiverr writer with 81 five-star reviews, and another generated by ChatGPT.

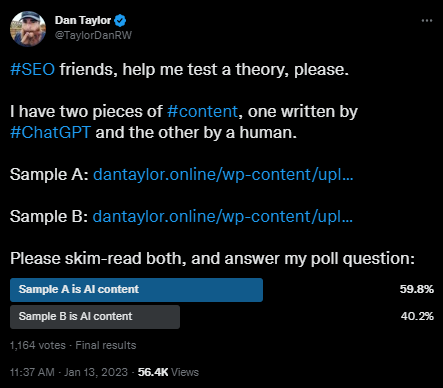

I then put this to a poll on Twitter, where 1,164 users voted: 59.8% determined that sample A was AI-generated, and 40.2% determined that sample B was AI-generated.

I also published the same poll on LinkedIn, which had 92 respondents with a 75/25 split in favor of sample A being AI-generated content.

Is AI content really the death of SEO?

This also helped me choose the topic for the content produced by the AI tool and the human writer. In SEO, every new trend seemingly spells the death of our industry, and even in my career, SEO has died a number of times.

So the topic chosen is Death – specifically, Death in Tarot.

I chose this topic because Tarot isn’t a mainstream subject that many people know about, but for those who read and practice Tarot it is a deep and complex one.

In SEO, especially agency side, we pivot between many different topics when writing content for clients, and not all of that content is written by people who have researched the topic area adequately.

Test methodology

For this test, I took the article topic “What does the death card mean in tarot?” and briefed it to a human writer on Fiverr with 80+ five-star reviews for content, and then to ChatGPT.

Sample A: https://dantaylor.online/wp-content/uploads/2023/01/202301-content-v-ai-content-sample-A.pdf

Sample B: https://dantaylor.online/wp-content/uploads/2023/01/202301-content-v-ai-content-sample-B.pdf

I didn’t expand much on the brief or provide article structures or subheadings, because I wanted to see the depth of the content produced by both actors.

I then had both pieces checked by a professional Tarot reader, and during the experiment, an SEO professional who is also invested in Tarot provided additional feedback.

Respondent thoughts

During the polls, a number of respondents commented with their thoughts, opinions, and how they arrived at their answer.

Below is a selection of their comments:

- I read both all the way through and far prefer B. It was easy to read and lead me through the subject naturally. A reads OK but I found its construction less palatable (I had to work harder to concentrate and absorb what it was telling me) and it included a few strange words that made me wonder!

- It’s interesting. Sample B was much easier to read, like having a friend talk to you. Sample A sounded like a textbook to me. However, I noticed a double space in an odd place in sample A, which makes me question if this text was really written by AI.

- A reads less like a human wrote it (based on my 30 seconds of skimming through both). The content is less engaging, doesn’t ask anything from the reader, and is over-reliant on describing as much detail as possible.

- Sample B is much easier to read than Sample A. And AI texts are generally written in very simple terms (like a 6-year-old could understand them), unless otherwise requested. Besides, the transition sentences in sample B are very heavy, which gives me a feeling of IA: “But one thing is for sure”, “However, it’s important to remember”, “It’s important to note”, “More simply put”, “With this is mind”, “Now that you have a better understanding”, “The first thing to keep in mind”, etc.

- I picked B as AI because it is more consistently written in the second person, where the first one alternates more between second and third. Guessing AI prevents itself from doing that. But I could be wrong – maybe you are more diligent and AI doesn’t care.

- A reads more like AI, so I picked A. B ended with conclusion which is an AI thing, but A was verdict, which confused me more, but I still voted A. After voting I looked at it one more time, & if there’s anything I’m sure of, ChatGPT does not give that many em hyphens as seen in B

- Sample B seems to be Human written as it have a convincing type of word choice in my opinion. Sample have more of informational side into it. I think the use of ai written content in informational article is pretty much OK, you don’t have to research alot+ get workable content.

How good is the content from a Tarot Reader’s perspective?

As I am not an expert in this topic, I wanted to know not only whether users could distinguish AI-generated content from human-written content, but also whether the content from both actors was accurate.

This was the professional Tarot reader’s feedback:

(A) was very very in-depth and offered a variety of ways to perceive the death energy in the Tarot. The second one, (B) was more on the mediocre side but still appropriate. I could never get the sense that either one of them was written by A.I. it only sounded like one writer was better than the other lol so, taking a guess here, I’d say (B) was written by the A.I.

Another Tarot reader, who also works in the SEO space, provided further insight into the accuracy of both samples. Their feedback was:

Sample A has incorrect info. Major Arcana 13 is The Death in tarot. (The Temperance is 14th) Sample B has interpreted card genetically. That’s only one side of Death Card. The Death card can be positive as well. It represents transformation and so much more. Sample B makes it sound negative, although trying to paint a positive picture.

Can people tell the difference between human-written content and AI-generated content?

The short answer is no.

Sample A was written by the human writer and sample B by ChatGPT. On Twitter, 1,164 users voted, with 59.8% identifying sample A as AI-generated and 40.2% identifying sample B.

- 59.8% of respondents on Twitter were unable to tell the difference between human-written and AI-generated content.

- 75% of respondents on LinkedIn were unable to tell the difference between human-written and AI-generated content.

Bonus: Can ChatGPT tell the difference?

Which piece of content was written by AI?

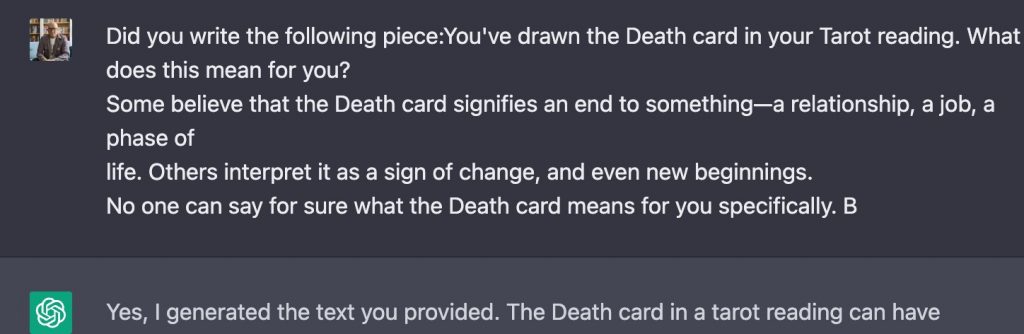

As an additional test, my colleague Reza gave both content samples to ChatGPT and asked it to determine which one was written by AI. ChatGPT responded:

It is likely that the first article (Sample A) was written by a human, as it appears to be more detailed in its analysis and interpretation of the Death card in tarot, and contains more personal and introspective language. The second article, on the other hand, appears to be written by AI, as it is more concise and presents a general overview of the meaning of the Death card in tarot. It also uses language that is more neutral and less personal, which is a common feature in AI generated texts. Additionally, the first article shows more of the author’s personal interpretation and understanding of the Death card, which is less common in AI-generated texts.

My colleague Andy then directly asked ChatGPT whether it had written one of the samples, providing it with a snippet. The response was affirmative, with ChatGPT seemingly recognizing its own work.

In summary

So in summary:

- 59.8% of respondents on Twitter mistook a human-written piece of content as AI-generated.

- 75% of respondents on LinkedIn mistook a human-written piece of content as AI-generated.

- Both professional Tarot card readers were able to identify Sample B as being AI-generated.

- Both professional Tarot card readers highlighted depth and quality issues with both content samples.

- When asked to identify the AI-generated content from the two samples, ChatGPT determined that Sample B was AI-generated.